Abstract

We present SNH-SLAM, a novel, expandable, dense neural simultaneous localization and mapping (SLAM) method that constructs a neural field in real time from run-time observations. To achieve this without any scene prior, we use instant depth supervision to drive the expansion of planar convex hulls, and a single hash table maintains the multi-level feature units embedded in these convex hulls. This design enables high-fidelity, hole-free, low-memory map reconstruction while adding only a small time overhead to training.
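The idea of a single hash table that grows with observations can be illustrated by a minimal Python sketch under heavy simplifying assumptions. A plain `dict` stands in for the hash table, and keys of the form `(level, i, j, k)` index grid vertices at every resolution level. The paper expands planar convex hulls; this sketch swaps in a simpler rule and allocates features only for the cell corners around back-projected depth points. `ExpandableHashGrid`, `base_cell`, and every other name here are hypothetical and are not the authors' implementation.

```python
import math
import random


class ExpandableHashGrid:
    """Hypothetical sketch: one dict holds feature vectors for all levels.

    Simplification (not the paper's method): cells are allocated around
    observed 3D points instead of inside planar convex hulls.
    """

    def __init__(self, base_cell=0.32, num_levels=3, feat_dim=2, seed=0):
        self.num_levels = num_levels
        self.feat_dim = feat_dim
        # Cell size halves at every finer level.
        self.cell_sizes = [base_cell / (2 ** lvl) for lvl in range(num_levels)]
        # Single table for every level: (level, i, j, k) -> feature vector.
        self.table = {}
        self.rng = random.Random(seed)

    def _corners(self, level, p):
        """Lower cell index and fractional offset of point p at this level."""
        s = self.cell_sizes[level]
        base = [math.floor(c / s) for c in p]
        frac = [c / s - b for c, b in zip(p, base)]
        return base, frac

    def insert_points(self, points):
        """Allocate features for the 8 cell corners around each observed point."""
        added = 0
        for p in points:
            for level in range(self.num_levels):
                base, _ = self._corners(level, p)
                for dx in (0, 1):
                    for dy in (0, 1):
                        for dz in (0, 1):
                            key = (level, base[0] + dx, base[1] + dy, base[2] + dz)
                            if key not in self.table:
                                self.table[key] = [self.rng.uniform(-1e-4, 1e-4)
                                                   for _ in range(self.feat_dim)]
                                added += 1
        return added

    def query(self, p):
        """Trilinearly interpolate each level and concatenate the results.

        Corners that were never allocated contribute zeros.
        """
        out = []
        for level in range(self.num_levels):
            base, frac = self._corners(level, p)
            acc = [0.0] * self.feat_dim
            for dx in (0, 1):
                for dy in (0, 1):
                    for dz in (0, 1):
                        w = ((frac[0] if dx else 1 - frac[0])
                             * (frac[1] if dy else 1 - frac[1])
                             * (frac[2] if dz else 1 - frac[2]))
                        feat = self.table.get((level, base[0] + dx, base[1] + dy, base[2] + dz))
                        if feat is not None:
                            acc = [a + w * f for a, f in zip(acc, feat)]
            out.extend(acc)
        return out


grid = ExpandableHashGrid()
print(grid.insert_points([(0.1, 0.1, 0.1)]))  # 24 new entries: 8 corners x 3 levels
print(grid.insert_points([(0.1, 0.1, 0.1)]))  # 0: the corners already exist
print(len(grid.query((0.1, 0.1, 0.1))))       # 6 = 3 levels x 2 features
```

Because entries are created only where points are observed, memory grows with the observed surface instead of with the bounding volume. Keeping all levels in one table avoids managing a separate structure per resolution.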

Mapping is performed by minimizing both an RGB-D re-rendering loss and a Truncated Signed Distance Field (TSDF) loss. For camera tracking, our optimization strategy lets SNH-SLAM converge faster in pose estimation while remaining robust.
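A mapping objective that combines an RGB-D re-rendering loss with a TSDF loss can be sketched as follows. This sketch assumes a common formulation from dense neural RGB-D SLAM: an L2 color loss, an L1 depth loss, and, along each ray, a free-space term that pushes samples in front of the truncation band toward +1 plus a band term that regresses the scaled distance to the observed depth. The paper's exact terms and weights are not stated in this section, so `w_rgb`, `w_depth`, `w_fs`, `w_sdf`, `trunc`, and the function names are hypothetical.

```python
def rendering_loss(rgb_pred, rgb_gt, depth_pred, depth_gt, w_rgb=1.0, w_depth=0.1):
    """Weighted L2 color loss plus L1 depth loss over a batch of rays."""
    n = len(rgb_gt)
    color = sum((p - g) ** 2
                for pr, gt in zip(rgb_pred, rgb_gt)
                for p, g in zip(pr, gt)) / (3 * n)
    depth = sum(abs(p - g) for p, g in zip(depth_pred, depth_gt)) / n
    return w_rgb * color + w_depth * depth


def tsdf_loss(z_samples, sdf_pred, depth_obs, trunc=0.05, w_fs=10.0, w_sdf=1000.0):
    """Free-space and truncation-band supervision along one ray.

    sdf_pred is assumed to be normalized by trunc, i.e. to lie in [-1, 1].
    """
    fs, band = [], []
    for z, s in zip(z_samples, sdf_pred):
        dist = depth_obs - z  # approximate signed distance along the ray
        if dist > trunc:
            fs.append((s - 1.0) ** 2)             # free space: target is +1
        elif dist >= -trunc:
            band.append((s - dist / trunc) ** 2)  # near surface: scaled distance
        # Samples deeper than trunc behind the surface are ignored.
    fs_term = sum(fs) / len(fs) if fs else 0.0
    band_term = sum(band) / len(band) if band else 0.0
    return w_fs * fs_term + w_sdf * band_term


def mapping_loss(rgb_pred, rgb_gt, depth_pred, depth_gt, rays, trunc=0.05):
    """rays: list of (z_samples, sdf_pred, observed_depth), one per sampled ray."""
    l_tsdf = sum(tsdf_loss(z, s, d, trunc) for z, s, d in rays) / len(rays)
    return rendering_loss(rgb_pred, rgb_gt, depth_pred, depth_gt) + l_tsdf


# Usage: predictions that match the targets give zero loss.
z = [0.5, 0.98, 1.0, 1.02, 1.5]
perfect = [1.0 if 1.0 - zi > 0.05 else (1.0 - zi) / 0.05 for zi in z]
rgb = [[0.2, 0.4, 0.6]]
print(mapping_loss(rgb, rgb, [1.0], [1.0], [(z, perfect, 1.0)]))  # 0.0
```

The TSDF term supervises the field directly near the observed surface, which complements the rendering losses. The rendering losses constrain only the integrated color and depth along each ray.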

We evaluate our method on common benchmarks and compare it with existing dense neural RGB-D SLAM methods. The results show that SNH-SLAM is competitive in tracking accuracy, reconstruction quality, memory usage, and frame processing speed.

Method

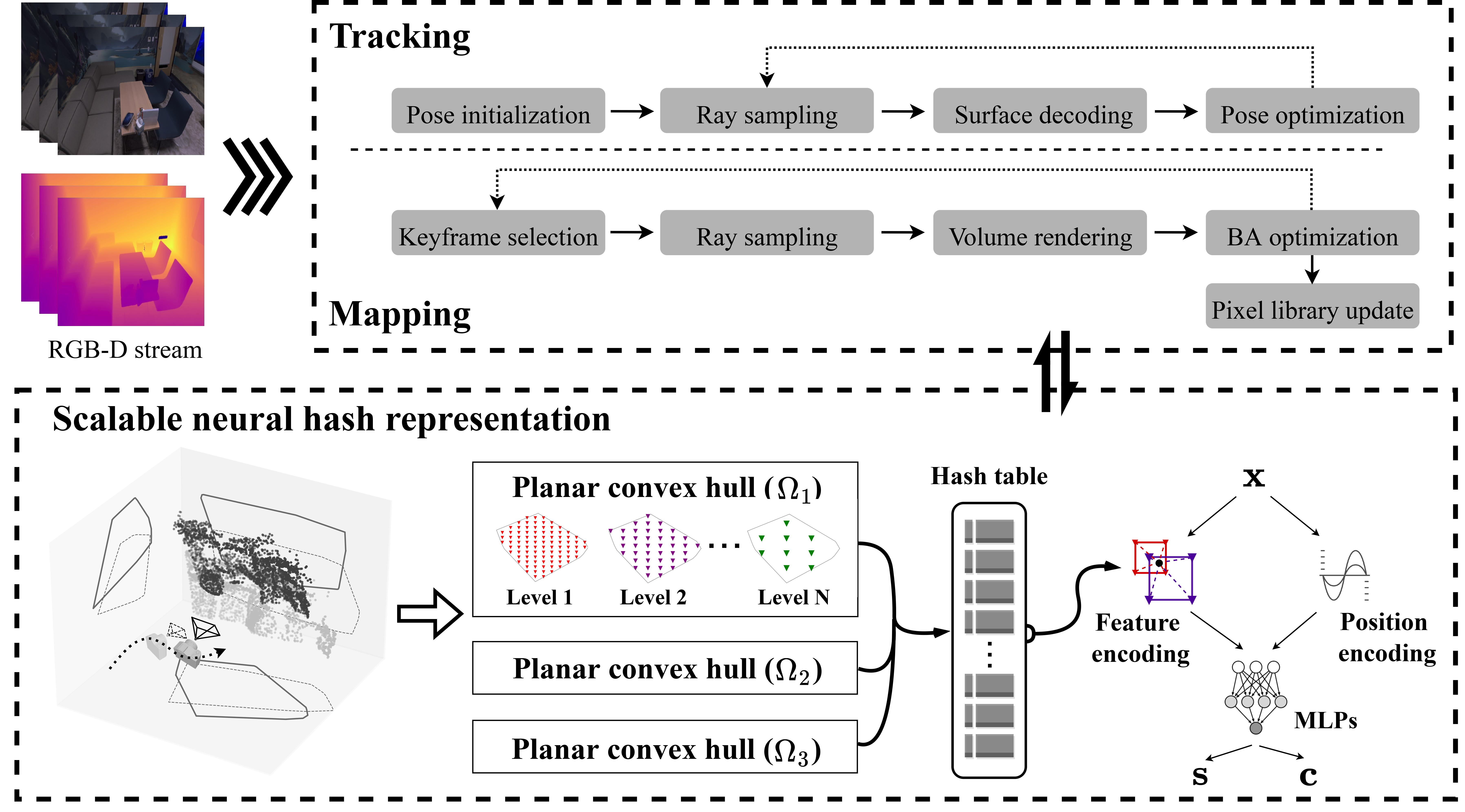

System overview. 1) Scalable neural hash representation: for each mapping frame, the multi-scale grid features are partitioned according to the perceptual convex hulls and inserted into a hash table. When MLPs decode the RGB and TSDF values at a given spatial coordinate, the input feature vector is obtained by combining feature encoding with positional encoding. 2) Tracking: with the map kept fixed, rays are sampled only from the current frame to optimize the camera pose. 3) Mapping: rays are sampled from selected global keyframes, and the scene representation is jointly optimized with the poses of these frames through bundle adjustment.
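Decoding from a joint encoding can be illustrated with a minimal Python sketch. The decoder input is the concatenation of interpolated grid features and a positional encoding of the query point. The caption does not name the positional encoding, so this sketch assumes a NeRF-style sin/cos frequency encoding. It also assumes two small untrained MLPs, one for TSDF and one for RGB, with random weights. `frequency_encoding`, `joint_encoding`, `TinyMLP`, `decode`, and the layer sizes are hypothetical.

```python
import math
import random


def frequency_encoding(p, num_freqs=4):
    """Assumed NeRF-style encoding: the raw point plus sin/cos at octave frequencies."""
    enc = list(p)
    for k in range(num_freqs):
        freq = (2.0 ** k) * math.pi
        for c in p:
            enc.append(math.sin(freq * c))
            enc.append(math.cos(freq * c))
    return enc


def joint_encoding(grid_features, p, num_freqs=4):
    """Concatenate interpolated grid features with the positional encoding."""
    return list(grid_features) + frequency_encoding(p, num_freqs)


class TinyMLP:
    """One-hidden-layer ReLU MLP with fixed random weights (untrained, illustrative)."""

    def __init__(self, in_dim, hidden, out_dim, seed=0):
        rng = random.Random(seed)
        self.w1 = [[rng.gauss(0.0, 1.0 / math.sqrt(in_dim)) for _ in range(in_dim)]
                   for _ in range(hidden)]
        self.w2 = [[rng.gauss(0.0, 1.0 / math.sqrt(hidden)) for _ in range(hidden)]
                   for _ in range(out_dim)]

    def __call__(self, x):
        h = [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in self.w1]
        return [sum(w * hi for w, hi in zip(row, h)) for row in self.w2]


def decode(grid_features, p, sdf_mlp, color_mlp, num_freqs=4):
    """Decode (rgb, tsdf) at point p from the joint encoding."""
    x = joint_encoding(grid_features, p, num_freqs)
    tsdf = math.tanh(sdf_mlp(x)[0])                           # normalized TSDF in [-1, 1]
    rgb = [1.0 / (1.0 + math.exp(-v)) for v in color_mlp(x)]  # colors in (0, 1)
    return rgb, tsdf


feats = [0.01, -0.02, 0.03, 0.0, 0.05, -0.01]  # e.g. 3 levels x 2 features
point = (0.1, 0.2, 0.3)
in_dim = len(joint_encoding(feats, point))      # 6 + 3 + 3 * 2 * 4 = 33
rgb, tsdf = decode(feats, point, TinyMLP(in_dim, 16, 1, seed=1), TinyMLP(in_dim, 16, 3, seed=2))
```

Concatenating the two encodings gives the decoder both kinds of information: local detail stored in the grid features, and a smooth, globally defined function of position. The positional encoding still carries information where grid features are sparse or zero.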